Before we start: in the spirit of the mid-2000s, I thought I'd have a go at blogging about events again. I've realised I miss the way that blogging and reading other people's posts from events made me feel part of a distributed community of fellow travellers. Journal articles don't have the same effect (they're too long and jargony for leisure readers, assuming they're accessible outside universities at all), and tweets are great for connecting with people, but they're very ephemeral. Here goes…

On September 3 I was at BBC Broadcasting House for 'AI, Society & the Media: How can we Flourish in the Age of AI?', organised by the BBC, LCFI and The Alan Turing Institute. Artificial intelligence is a hot topic, so it was a sell-out event. My notes are very partial (in both senses of the word), so please do let me know if there are errors. The event hashtag will provide more coverage: https://twitter.com/hashtag/howcanweflourish.

The first session was 'AI – What you need to know!'. Matthew Postgate began by providing context for the BBC's interest in AI. 'We need a plurality of business models for AI – not just ad-funded' – yes! The need for different models for AI (and related subjects like machine learning) was a theme that recurred throughout the day (and at other events I was at this week).

Adrian Weller spoke on the limitations of AI. It's data-hungry, compute-intensive, poor at representing uncertainty and easily fooled by adversarial examples (and more that I missed). We need sensible measures of trustworthiness, including robustness, fairness, protection of privacy and transparency.

Been Kim shared Google's AI principles: https://ai.google/principles. She's focused on interpretability; the goals are to ensure that our values are aligned and our knowledge is reflected. She emphasised the need to understand your data (another theme across the day and at other events this week). You can build an inherently interpretable machine learning model (so it can explain its reasoning), or you can build an interpreter, enabling conversations between humans and machines. You can then uncover bias using the interpreter, asking what weight the model gave to different aspects in making decisions.

Jonnie Penn (who won me over with an early shout-out to the work of Jon Agar) asked: from where does AI draw its authority? AI is feeding a monopoly of Google, Amazon and Facebook, who control the majority of internet traffic and advertising spend. Power lies in choosing what to optimise for, and in choosing what not to do (a tragically poor paraphrase of his example of advertising to children, but you get the idea). We need 'bureaucratic biodiversity': lots of models of diverse systems to avoid calcification.

Kate Coughlan – only 10% of people feel they can influence AI. They looked at media narratives about AI on the axes of time (ease vs obsolescence), power (domination vs uprising), desire (gratification vs alienation) and life (immortality vs inhumanity). Their survey found that each aspect was equally disempowering. Passivity drives negative feelings about change and technology, but if people have agency, it's different. We need to empower citizens to take an active role in shaping AI.

The next session was 'Fake News, Real Problems: How AI both builds and destroys trust in news'. Ryan Fox spoke on 'manufactured consensus': we're hardwired to agree with our community, so you can manipulate opinion by making it look like everyone else thinks a certain way. Manipulating consensus is currently legal, though it's against social networks' T&Cs. 'Viral false narratives can jeopardise brand trust and integrity in an instant'. Manufactured outrage campaigns, etc. They're working on detecting inorganic behaviour through the noise: it's rapid, repetitive, sticky, emotional (I missed some).

One of the panel questions was: would AI replace journalists? No, it's more like having lots of interns, and you wouldn't have them write articles. AI is good for tasks you could explain to a smart 16-year-old spending a day in the office. The problematic ad-based model came up again: who is the arbiter of truth (e.g. fake news on Facebook)? Who's paying for those services, and what power does that give them?

This panel made me think about our discussions at work about machine learning and AI. There are so many technical, contextual and ethical challenges for collecting institutions in AI, from capturing the output of an interactive voice experience with Alexa, to understanding and recording the difference between Russia Today as a broadcast news channel and as a manipulator of YouTube rankings.

Next was a panel on 'AI as a Creative Enabler'. Cassian Harrison spoke about 'Made By Machine', an experiment with AI and archive programming. They applied scene detection, subtitle analysis, visual 'energy' and machine learning to the BBC's Redux archive of programmes. Programmes were ranked by how BBC4 they were, split into sections, then edited down to create mini BBC4 programmes.

Kanta Dihal and Stephen Cave asked why AI fascinates us in a thoughtful presentation. It sits between dead and alive, it's uncanny (and lots more, but clearly my post-lunch notetaking isn't the best).

Anna Ridler and Amy Cutler have created an AI-scripted nature documentary (trained on and repurposing a range of tropes and footage from romance novels and nature documentaries) and gave a brilliant presentation about AI as a medium and as a process. Anna calls herself a dataset artist rather than a machine learning artist. You need to get to know the dataset, look out for biases and mistakes, and understand the humanness of decisions about what was included or excluded. Machines enact distorted versions of language.

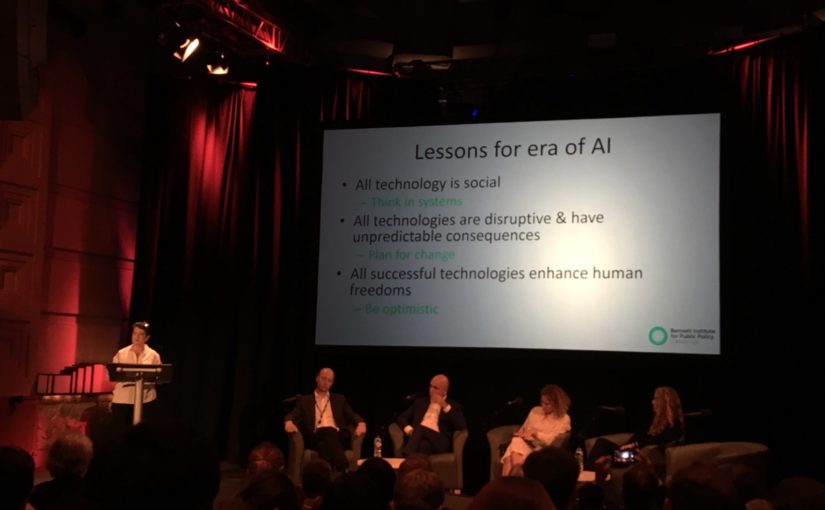

I don't have notes from 'Next Gen AI: How can the next generation flourish in the age of AI?', but it was great to hear about hackathons where teenagers could try applying AI.

The final session was 'The Conditions for Flourishing: How to increase citizen agency and social value'. Hannah Fry: once something is dressed up as an algorithm, it gains an authority that's hard to question. Diane Coyle talked about 'general purpose technologies', which transform one industry, then others: printing, steam, electricity, the internal combustion engine, digital computing, AI. Her 'lessons for the era of AI' were: all technology is social; all technologies are disruptive and have unpredictable consequences; all successful technologies enhance human freedoms. Accordingly, she suggested we 'think in systems; plan for change; be optimistic'.

Konstantinos Karachalios called for a show of hands from those who feel they have control over their data and what's done with it. Very few hands were raised. 'If we don't act now we'll lose our agency'.

I'm going to give the final word to Terah Lyons as the key takeaway from the day: 'technology is not destiny'.

I didn't hear a solution to the problems of 'fake news' that doesn't require work from all of us. If we don't want technology to be destiny, we all need to pay attention to the applications of AI in our lives, and be prepared to demand better governance and accountability from private and government agents.

(A bonus 'question I didn't ask' for those who've read this far: how do the BBC's aims for ethical AI relate to the introduction of compulsory registration to access TV and radio? If I turn on the radio in my kitchen, my listening habits aren't tracked; if I listen via the app, they're linked to my personal ID.)
