I'm spending a few hours of my Sunday experimenting with 'mapping for humanists' with an art historian friend, Hannah Williams (@_hannahwill). We're going to have a go at solving some issues she has encountered when geo-coding addresses in 17th and 18th Century Paris, and we'll post as we go to record the process and hopefully share some useful reflections on what we found as we tried different tools.

We started by working out what issues we wanted to address. After some discussion we boiled it down to two basic goals: a) to geo-reference historical maps so they can be used to geo-locate addresses and b) to generate maps dynamically from a list of addresses. This also means dealing with copyright and licensing issues along the way and thinking about how geospatial tools might fit into the everyday working practices of a historian. (e.g. while a tool like Google Refine can easily generate maps, is it usable for people who are more comfortable with Word than with cloud-based services like Google Docs? And if copyright is a concern, is it as easy to put points on an OpenStreetMap as on a Google Map?)

As with many historians, Hannah's use of maps falls into two main areas: maps as illustrations, and maps as analytic tools. Maps used for illustrations (e.g. in publications) are ideally copyright-free, or can at least be used as illustrative screenshots. Interactivity is a lower priority for now as the dataset would be private until the scholarly publication is complete (owing to concerns about the lack of an established etiquette and format for citation and credit for online projects).

Maps used for analysis would ideally support layers of geo-referenced historic maps on top of modern map services, allowing historic addresses to be visually located via contemporaneous maps and geo-located via the link to the modern map. Hannah has been experimenting with finding location data via old maps of Paris in Hypercities, but manually locating 18th Century streets on historic maps, then matching those locations to modern maps, is time-consuming, and she suspects there are more efficient ways to map old addresses onto modern Paris.

Based on my research interviews with historians and my own experience as a programmer, I'd also like to help humanists generate maps directly from structured data (and ideally to store their data in user-friendly tools so that it's as easy to re-use as it is to create and edit). I'm not sure if it's possible to do this from existing tools or whether they'd always need an export step, so one of my questions is whether there are easy ways to get records stored in something like Word or Excel into an online tool and create maps from there. Some other issues historians face in using mapping include: imprecise locations (e.g. street names without house numbers); potential changes in street layouts between historic and modern maps; incomplete datasets; using markers to visually differentiate types of information on maps; and retaining descriptive location data and other contextual information.
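To make the 'structured data to map' idea a bit more concrete, here is a minimal sketch of what an export step might look like if the records were saved from Excel as CSV. It is only an illustration under stated assumptions: the column names (`name`, `address`, `precision`, `lat`, `lon`) are hypothetical, the rows and coordinates are made-up placeholders rather than real data, and it assumes the coordinates have already been found by some other means. It writes GeoJSON, a format that many web mapping tools can read, keeps the descriptive fields alongside each point, and sets aside rows that have no location yet instead of dropping them silently.

```python
import csv
import io
import json

# Hypothetical spreadsheet export. Column names and all values are
# placeholders invented for illustration, not real research data.
SAMPLE_CSV = """name,address,precision,lat,lon
Example artist A,rue Saint-Honoré,street only,48.8625,2.3350
Example artist B,"12 rue de Seine",house number,48.8550,2.3370
Example artist C,unknown,,,
"""


def rows_to_geojson(csv_text):
    """Turn CSV rows with lat/lon columns into a GeoJSON FeatureCollection.

    Returns (collection, unlocated), where unlocated holds the rows whose
    coordinates are missing or not numeric.
    """
    features, unlocated = [], []
    for row in csv.DictReader(io.StringIO(csv_text)):
        try:
            lat, lon = float(row["lat"]), float(row["lon"])
        except (TypeError, ValueError):
            unlocated.append(row)
            continue
        features.append({
            "type": "Feature",
            # GeoJSON stores coordinates as [longitude, latitude].
            "geometry": {"type": "Point", "coordinates": [lon, lat]},
            # Keep every descriptive column, including how precise the address is.
            "properties": {k: v for k, v in row.items() if k not in ("lat", "lon")},
        })
    return {"type": "FeatureCollection", "features": features}, unlocated


collection, unlocated = rows_to_geojson(SAMPLE_CSV)
print(json.dumps(collection, ensure_ascii=False, indent=2))
print(f"{len(unlocated)} row(s) still need a location")
```

Keeping a `precision` column is one possible way to record that an address is only known to street level, so imprecise locations can later be styled differently from exact ones.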

Because the challenge is to help the average humanist, I've assumed we should stay away from software that needs to be installed on a server, so to start with we're trying some of the web-based geo-referencing tools listed at http://help.oldmapsonline.org/georeference.

Geo-referencing tools for non-technical people

Hannah's been trying georeferencer.org, Hypercities and Heurist (thanks to Lise Summers @morethangrass on Twitter) and has written up her findings at 'Hacking Historical Maps… or trying to'. Thanks also to Alex Butterworth @AlxButterworth and Joseph Reeves @iknowjoseph for suggestions during the day.

Yahoo! Mapmixer's page was a 404 – I couldn't find any reference to the service being closed, but I also couldn't find a current link for it.

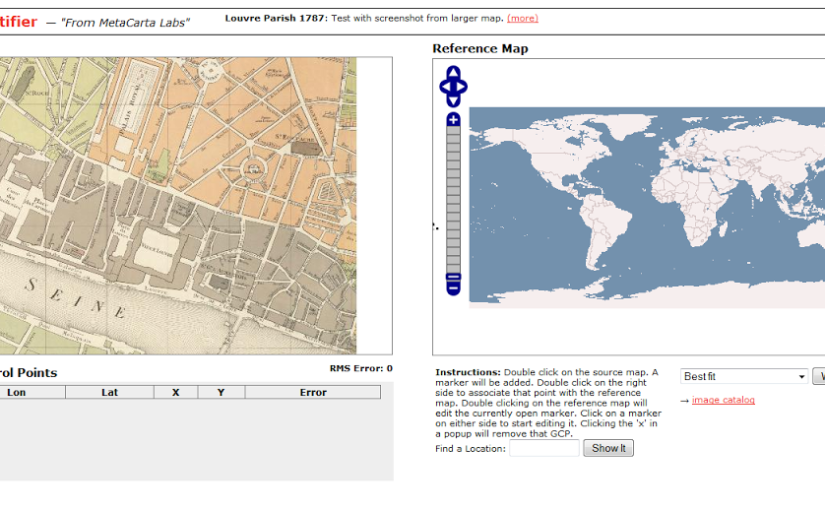

Next I tried MetaCarta Labs' Map Rectifier. Any maps uploaded to this service are publicly visible, though the site says this does 'not grant a copyright license to other users'. It also warns that '[t]here is no expectation of privacy or protection of data', which may be a concern for academics negotiating the line between openness and protecting work-in-progress, or for anyone dealing with sensitive data. Many of the historians I've interviewed for my PhD research feel that some sense of control over who can view and use their data is important, though the reasons why and how this is manifested vary.

Screenshot from http://labs.metacarta.com/rectifier/rectify/7192

The site has clear instructions – 'double click on the source map… Double click on the right side to associate that point with the reference map' – but the search within the right-hand 'reference map' didn't work, and manually navigating to Paris, then to the right section of Paris, was a huge pain. Neither of the base maps seemed to have labels, so finding the right location at the right level of zoom was too hard and eventually I gave up. Maybe the service isn't meant to deal with that level of zoom? We were using a very small section of map for our trials.

Inspired by MetaCarta's Map Rectifier, Map Warper was written with OpenStreetMap in mind, which immediately helps us get closer to the goal of images usable in publications. Map Warper is also used by the New York Public Library, which described it as a 'tool for digitally aligning ("rectifying") historical maps … to match today's precise maps'. Map Warper also makes all uploaded maps public by default: 'By uploading images to the website, you agree that you have permission to do so, and accept that anyone else can potentially view and use them, including changing control points'. It does, however, offer 'Map visibility' options of 'Public(default)' and 'Don't list the map (only you can see it)'.

Screenshot showing 'warped' historical map overlaid on OpenStreetMap at http://mapwarper.net/

Once a map is uploaded, it zooms to a 'best guess' location, presumably based on the information you provided when uploading the image. It's a powerful tool, though I suspect it works better with larger images with more room for error. Some of the functionality is a little obscure to the casual user – for example, the 'Rectify' view tells me '[t]his map either is not currently masked. Do you want to add or edit a mask now?' without explaining what a mask is. However, I can live with some roughness around the edges because once you've warped your map (i.e. aligned it with a modern map), there's a handy link on the Export tab, 'View KML in Google Maps' that takes you to your map overlaid on a modern map. Success!
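For anyone curious about what 'warping' with control points means in principle, here is a minimal sketch of the simplest version of the idea: given three points identified on both the scanned image (in pixels) and the modern map (as longitude and latitude), you can work out an affine transform that converts any pixel position into a modern coordinate. I'm not claiming this is what Map Warper does internally; tools like it typically offer more flexible transforms that cope with distortion in old maps. The function names and the control point values below are invented for illustration.

```python
def solve3(a, b):
    """Solve the 3x3 linear system a * x = b using Cramer's rule."""
    def det(m):
        return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
                - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
                + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

    d = det(a)
    if abs(d) < 1e-12:
        raise ValueError("control points are collinear; choose points that form a triangle")
    solution = []
    for col in range(3):
        # Replace one column of a with b, as Cramer's rule requires.
        replaced = [row[:col] + [b[i]] + row[col + 1:] for i, row in enumerate(a)]
        solution.append(det(replaced) / d)
    return solution


def fit_affine(control_points):
    """Fit an affine pixel-to-lon/lat transform from exactly three control points.

    control_points is a list of three ((pixel_x, pixel_y), (lon, lat)) pairs.
    Returns a function mapping (pixel_x, pixel_y) to (lon, lat).
    """
    if len(control_points) != 3:
        raise ValueError("this sketch needs exactly three control points")
    a = [[float(px), float(py), 1.0] for (px, py), _ in control_points]
    lon_c = solve3(a, [lon for _, (lon, _) in control_points])
    lat_c = solve3(a, [lat for _, (_, lat) in control_points])

    def pixel_to_lonlat(px, py):
        lon = lon_c[0] * px + lon_c[1] * py + lon_c[2]
        lat = lat_c[0] * px + lat_c[1] * py + lat_c[2]
        return lon, lat

    return pixel_to_lonlat


# Made-up control points: image corners matched to invented coordinates.
CONTROL_POINTS = [
    ((0, 0), (2.30, 48.90)),
    ((1000, 0), (2.40, 48.90)),
    ((0, 1000), (2.30, 48.80)),
]

to_lonlat = fit_affine(CONTROL_POINTS)
lon, lat = to_lonlat(500, 500)
print(round(lon, 4), round(lat, 4))
```

An affine transform can only shift, scale, rotate and shear the image, which is one reason a small or distorted map fragment may need more control points and a more flexible method than this sketch uses.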

Sadly, not all the export options seem to be complete (they weren't working on my map, anyway), so I couldn't work out if there was a non-geek-friendly way to open the map in OpenStreetMap.

We have to stop here for now, but at this point we've met one of the goals – to geo-reference historical maps so locations from the past can be found in the present – but the other will have to wait for another day. (I'd probably start with openheatmap.com when we tackle it again. Any other suggestions would be gratefully received!)