In September I was invited to give a keynote at the Museum Theme Days 2016 in Helsinki. I spoke on 'Reaching out: museums, crowdsourcing and participatory heritage'. In lieu of my notes or slides, the video is below. (Great image, thanks YouTube!)

Category: My PhD

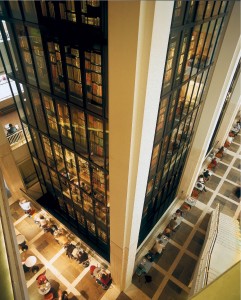

Digital curator at the British Library?!

I have a new job! I'm the newest Digital Curator at the British Library. That link takes you to a post on the BL blog for a bit more about what my job involves. If you've read any of my posts over the past couple of years, you'll know that working to encourage digital scholarship is a pretty good fit for my research and teaching interests.

In other news, I passed my PhD viva! I've got a couple of minor corrections to fit in around work and various papers, and then my PhD is over! (Unless I decide to publish from my thesis, of course…)

Early PhD findings: Exploring historians' resistance to crowdsourced resources

I wrote up some early findings from my PhD research for conferences back in 2012 when I was working on questions around 'but will historians really use resources created by unknown members of the public?'. People keep asking me for copies of my notes (and I've noticed people citing an online video version which isn't ideal) and since they might be useful and any comments would help me write up the final thesis, I thought I'd be brave and post my notes.

A million caveats apply – these were early findings, my research questions and focus have changed and I've interviewed more historians and reviewed many more participative history projects since then; as a short paper I don't address methods etc; and obviously it's only a tiny part of a huge topic… (If you're interested in crowdsourcing, you might be interested in other writing related to scholarly crowdsourcing and collaboration from my PhD, or my edited volume on 'Crowdsourcing our cultural heritage'.) So, with those health warnings out of the way, here it is. I'd love to hear from you, whether with critiques, suggestions, or just stories about how it relates to your experience. And obviously, if you use this, please cite it!

Exploring historians' resistance to crowdsourced resources

Scholarly crowdsourcing may be seen as a solution to the backlog of historical material to be digitised, but will historians really use resources created by unknown members of the public?

The Transcribe Bentham project describes crowdsourcing as 'the harnessing of online activity to aid in large scale projects that require human cognition' (Terras, 2010a). 'Scholarly crowdsourcing' is a related concept that generally seems to involve the collaborative creation of resources through collection, digitisation or transcription. Crowdsourcing projects often divide up large tasks (like digitising an archive) into smaller, more manageable tasks (like transcribing a name, a line, or a page); this method has helped digitise vast numbers of primary sources.
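The idea of dividing a large task into smaller ones can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Microtask` class, the `split_into_microtasks` function and the page identifiers are hypothetical names invented for this example, not part of Transcribe Bentham or any other project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Microtask:
    """One small unit of work, e.g. transcribing a single line of a page."""
    page_id: str
    line_number: int


def split_into_microtasks(pages: dict[str, int]) -> list[Microtask]:
    """Turn a {page_id: number_of_lines} mapping into one task per line."""
    return [
        Microtask(page_id, line_number)
        for page_id, line_count in pages.items()
        for line_number in range(1, line_count + 1)
    ]


# Hypothetical archive: two pages with three and two lines respectively.
archive = {"box1_page1": 3, "box1_page2": 2}
tasks = split_into_microtasks(archive)
print(len(tasks))  # 5
```

Each `Microtask` could then be offered to a different contributor, which is what makes a page, a box or a whole archive feel manageable to someone with ten minutes to spare.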

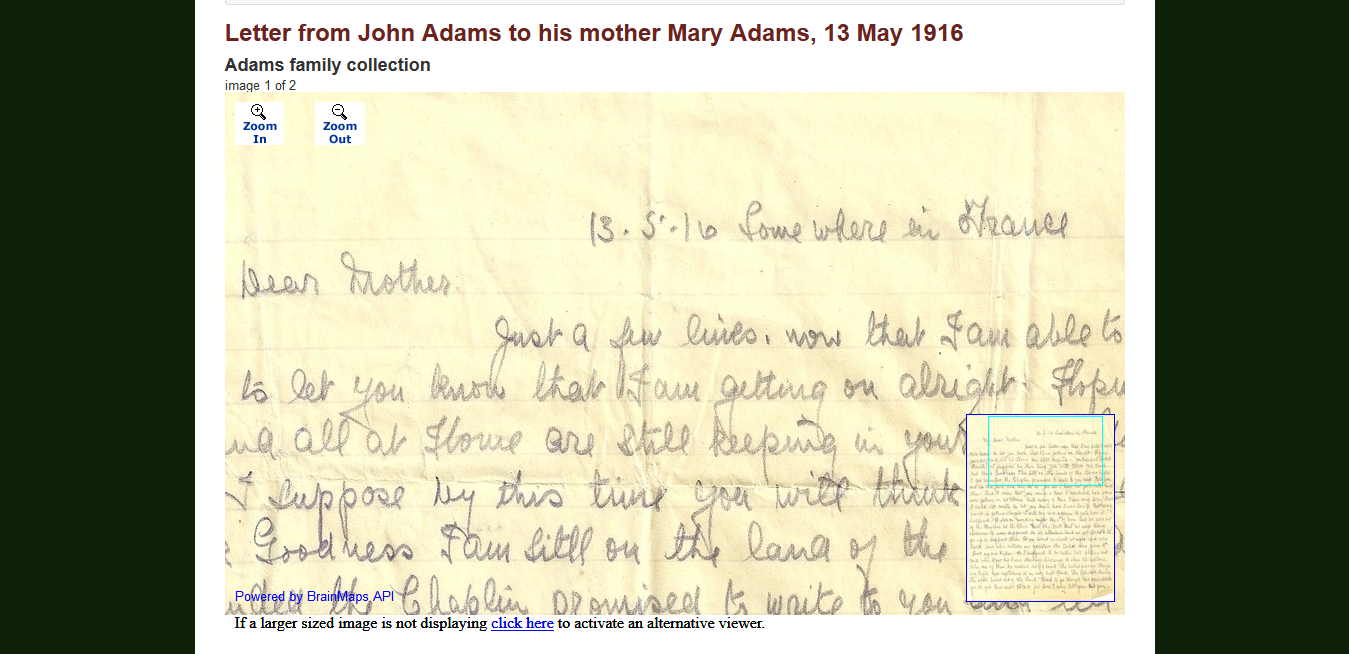

My doctoral research was inspired by a vision of 'participant digitization', a form of scholarly crowdsourcing that seeks to capture the digital records and knowledge generated when researchers access primary materials in order to openly share and re-use them. Unlike many crowdsourcing projects which are designed for tasks performed specifically for the project, participant digitization harnesses the transcription, metadata creation, image capture and other activities already undertaken during research and aggregates them to create re-usable collections of resources.

Research questions and concepts

When Howe clarified his original definition, stating that the 'crucial prerequisite' in crowdsourcing is 'the use of the open call format and the large network of potential laborers', a 'perfect meritocracy' based not on external qualifications but on 'the quality of the work itself', he created a challenge for traditional academic models of authority and credibility (Howe 2006, 2008). Furthermore, how does anonymity or pseudonymity (defined here as often long-standing false names chosen by users of websites) complicate the process of assessing the provenance of information on sites open to contributions from non-academics? An academic might choose to disguise their identity to mask their research activities from competing peers, from a desire to conduct early exploratory work in private or simply because their preferred username was unavailable; but when contributors are not using their real names they cannot derive any authority from their personal or institutional identity. Finally, which technical, social and scholarly contexts would encourage researchers to share (for example) their snippets of transcription created from archival documents, and to use content transcribed by others? What barriers exist to participation in crowdsourcing or prevent the use of crowdsourced content?

Methods

I interviewed academic and family/local historians about how they evaluate, use, and contribute to crowdsourced and traditional resources to investigate how a resource based on 'meritocracy' disrupts current notions of scholarly authority, reliability, trust, and authorship. These interviews aimed to understand current research practices and probe more deeply into how participants assess different types of resources, their feelings about resources created by crowdsourcing, and to discover when and how they would share research data and findings.

I sought historians investigating the same country and time period in order to have a group of participants who faced common issues with the availability and types of primary sources from early modern England. I focused on academic and 'amateur' family or local historians because I was interested in exploring the differences between them to discover which behaviours and attitudes are common to most researchers and which are particular to academics and the pressures of academia.

I recruited participants through personal networks and social media, and conducted interviews in person or on Skype. At the time of writing, 17 participants have been interviewed for up to 2 hours each. It should be noted that these results are of a provisional nature and represent a snapshot of on-going research and analysis.

Early results

I soon discovered that citizen historians are perfect examples of Pro-Ams: 'knowledgeable, educated, committed, and networked' amateurs 'who work to professional standards' (Leadbeater and Miller, 2004; Terras, 2010b).

How do historians assess the quality of resources?

Participants often simply said they drew on their knowledge and experience when sniffing out unreliable documents or statements. When assessing secondary sources, their tacit knowledge of good research and publication practices was evident in common statements like '[I can tell from] it's the way it's written'. They also cited the presence and quality of footnotes, and the depth and accuracy of information as important factors. Transcribed sources introduced another layer of quality assessment – researchers might assess a resource by checking for transcription errors that are often copied from one database to another. Most researchers used multiple sources to verify and document facts found in online or offline sources.

When and how do historians share research data and findings?

It appears that between accessing original records and publishing information, there are several key stages where research data and findings might be shared. Stages include acquiring and transcribing records, producing visualisations like family trees and maps, publishing informal notes and publishing synthesised content or analysis; whether a researcher passes through all the stages depends on their motivation and audience. Information may change formats between stages, and since many claim not to share information that has not yet been sufficiently verified, some information would drop out before each stage. It also appears that in later stages of the research process the size of the potential audience increases and the level of trust required to share with them decreases.

For academics, there may be an additional, post-publication stage when resources are regarded as 'depleted' – once they have published what they need from them, they would be happy to share them. Family historians meanwhile see some value in sharing versions of family trees online, or in posting names of people they are researching to attract others looking for the same names.

Sharing is often negotiated through private channels and personal relationships. Methods of controlling sharing include showing people work in progress on a screen rather than sending it to them and using email in preference to sharing functionality supplied by websites – this targeted, localised sharing allows the researcher to retain a sense of control over early stage data, and so this is one key area where identity matters. Information is often shared progressively, and getting access to more information depends on your behaviour after the initial exchange – for example, crediting the provider in any further use of the data, or reciprocating with good data of your own.

When might historians resist sharing data?

Participants gave a range of reasons for their reluctance to share data. Being able to convey the context of creation and the qualities of the source materials is important for historians who may consider sharing their 'depleted' personal archives – not being able to provide this means they are unlikely to share. Being able to convey information about data reliability is also important. Some information about the reliability of a piece of information is implicitly encoded in its format (for example, in pencil in notebooks versus electronic records), in hedging phrases in text, in the number of corroborating sources, or in a value judgement about those sources. If it is difficult to convey levels of 'certainty' about reliability when sharing data, it is less likely that people will share it – participants felt a sense of responsibility about not publishing (even informally) information that hasn't been fully verified. This was particularly strong in academics. Some participants confessed to sneaking forbidden photos of archival documents they ran out of time to transcribe in the archive; unsurprisingly it is unlikely they would share those images.

Overall, if historians do not feel they would get information of equal value back in exchange, they seem less likely to share. Professional researchers do not want to give away intellectual property, and feel sharing data online is risky because the protocols of citation and fair use are presently uncertain. Finally, researchers did not always see a point in sharing their data. Family history content was seen as too specific and personal to have value for others; academics may realise the value of their data within their own tightly-defined circles but not realise that their records may have information for other biographical researchers (i.e. people searching by name) or other forms of history.

Which concerns are particular to academic historians?

Reputational risk is an issue for some academics who might otherwise share data. One researcher said: 'we are wary of others trawling through our research looking for errors or inconsistencies. […] Obviously we were trying to get things right, but if we have made mistakes we don't want to have them used against us. In some ways, the less you make available the better!'. Scholarly territoriality can be an issue – if there is another academic working on the same resources, their attitude may affect how much others share. It is also unclear how academic historians would be credited for their work if it was performed under a pseudonym that does not match the name they use in academia.

What may cause crowdsourced resources to be under-used?

In this research, 'amateur' and academic historians shared many of the same concerns for authority, reliability, and trust. The main reported cause of under-use (for all resources) is not providing access to original documents as well as transcriptions. Researchers will use almost any information as pointers or leads to further sources, but they will not publish findings based on that data unless the original documents are available or the source has been peer-reviewed. Checking the transcriptions against the original is seen as 'good practice', part of a sense of responsibility 'to the world's knowledge'.

Overall, the identity of the data creator is less important than expected – for digitised versions of primary sources, reliability is not vested in the identity of the digitiser but in the source itself. Content found on online sites is tested against a set of finely-tuned ideas about the normal range of documents rather than the authority of the digitiser.

Cite as:

References

Howe, J. (undated). Crowdsourcing: A Definition. http://crowdsourcing.typepad.com

Howe, J. (2006). Crowdsourcing: A Definition. http://crowdsourcing.typepad.com/cs/2006/06/crowdsourcing_a.html

Howe, J. (2008). Join the crowd: Why do multinationals use amateurs to solve scientific and technical problems? The Independent. http://www.independent.co.uk/life-style/gadgets-and-tech/features/join-the-crowd-why-do-multinationals-use-amateurs-to-solve-scientific-and-technical-problems-915658.html

Leadbeater, C., and Miller, P. (2004). The Pro-Am Revolution: How Enthusiasts Are Changing Our Economy and Society. Demos, London, 2004. http://www.demos.co.uk/files/proamrevolutionfinal.pdf

Terras, M. (2010a). Crowdsourcing cultural heritage: UCL's Transcribe Bentham project. Presented at: Seeing Is Believing: New Technologies For Cultural Heritage. International Society for Knowledge Organization, UCL (University College London). http://eprints.ucl.ac.uk/20157/

Terras, M. (2010b). “Digital Curiosities: Resource Creation via Amateur Digitization.” Literary and Linguistic Computing 25, no. 4 (October 14, 2010): 425–438. http://llc.oxfordjournals.org/cgi/doi/10.1093/llc/fqq019

Request for research participants: academic historians and historical geographers

(For those who don't know me or who mostly know me through digital heritage work, some background to support my call for PhD research participants…)

I am a PhD student in the Department of History at the Open University. I am conducting interviews to understand how online resources have (or have not) altered historians' patterns of work, and I would particularly like to interview academic historians or historical geographers who are researching people and places in British history from the 1600s-1900. I would like to talk to a range of participants, including those who are not clued up on online resources, those who are enthusiastic advocates of all things digital and people at all stages in-between. I would really love to hear from people researching women's histories or the history of science, but I'm interested in any kind of history.

Interviews are carried out on the phone, Skype, or in person if you prefer and can meet within reasonable distance from London or Oxford. Interviews take 40 – 120 minutes and will be carried out in December and January. Many previous participants have said they enjoyed the interview and benefitted from the opportunity to reflect on their work.

To volunteer to take part in an interview, or for more information, please email me at mia.ridge@gmail.com.

For more information visit Information for potential research participants and for background about my PhD visit My PhD research. Please feel free to pass this on to anyone who might be interested in being interviewed.

The ever-morphing PhD

I wrote this for the NEH/Polis Summer Institute on deep mapping back in June but I'm repurposing it as a quick PhD update as I review my call for interview participants. I'm in the middle of interviews at the moment (and if you're an academic historian working on British history 1600-1900 who might be willing to be interviewed I'd love to hear from you) and after that I'll no doubt be taking stock of the research landscape, the findings from my interviews and project analyses, and updating the shape of my project as we go into the new year. So it doesn't quite reflect where I'm at now, but at the very least it's an insight into the difficulties of research into digital history methodologies when everything is changing so quickly:

"Originally I was going to build a tool to support something like crowdsourced deep mapping through a web application that would let people store and geolocate documents and images they were digitising. The questions that are particularly relevant for this workshop are: what happens when crowdsourcing or citizen history meet deep mapping? Can a deep map created by multiple people for their own research purposes support scholarly work? Can a synthetic, ad hoc collection of information be used to support an argument or would it be just for the discovery of spatio-temporally relevant material? How would a spatial narrative layer work?

I planned to test this by mapping the lives and intellectual networks of early scientific women. But after conducting a big review of related projects I eventually realised that there's too much similar work going on in the field and that inevitably something similar would have been created by someone with more resources by the time I was writing up. So I had to rethink my question and my methods.

So now my PhD research seeks to answer 'how do academic and family/local historians evaluate, use and contribute to crowdsourced resources, especially geo-located historical materials?', with the goal of providing some insight into the impact of digitality on research practices and scholarship in the humanities. … How do trained and self-taught historians cope with changes in place names and boundaries over time, and the many variations and similarities in place names? Does it matter if you've never been to the place and don't know that it might be that messy and complex?

I'm interested in how living in a digital culture affects how researchers work. What does it mean to generate as well as consume digital data in the course of research? How does user-created content affect questions of authorship, authority and trust for amateur historians and scholarly practice? What are the characteristics of a well-designed digital resource, and how can resources and tools for researchers be improved? It's a very Human-Computer Interaction/Informatics view of the digital humanities but it addresses the issues around discoverability and usability that are so important for people building projects.

I'm currently interviewing academic, family and local historians, focusing on those working on research on people or places in early modern England – very loosely defined, as I'll go 1600-1900. I'm asking them about the tools they currently use in their research; how they assess new resources; if or when they might use a resource created through crowdsourcing or user contributions (e.g. Wikipedia or ancestry.com); how they work out which online records to trust; and how they use place names or geographic locations in their research.

So far I've mostly analysed the interviews for how people think about crowdsourcing; I'll be focusing on the responses to place when I get back.

More generally, I'm interested in the idea of 'chorography 2.0' – what would it look like now? The abundance of information is as much of a problem as an opportunity: how to manage that?"

Halfway through 'deep maps and spatial narratives' summer institute

I'm a week and a bit into the NEH Institute for Advanced Topics in the Digital Humanities on 'Spatial Narrative and Deep Maps: Explorations in the Spatial Humanities', so this is a (possibly self-indulgent) post to explain why I'm over in Indianapolis and why I only seem to be tweeting with the #PolisNEH hashtag. We're about to dive into three days of intense prototyping before wrapping things up on Friday, so I'm posting almost as a marker of my thoughts before the process of thinking-through-making makes me re-evaluate our earlier definitions. Stuart Dunn has also blogged more usefully on Deep maps in Indy.

We spent the first week hearing from the co-directors David Bodenhamer (history, IUPUI), John Corrigan (religious studies, Florida State University), and Trevor Harris (geography, West Virginia University) and guest lecturers Ian Gregory (historical GIS and digital humanities, Lancaster University) and May Yuan (geonarratives, University of Oklahoma), and also from selected speakers at the Digital Cultural Mapping: Transformative Scholarship and Teaching in the Geospatial Humanities at UCLA. We also heard about the other participants' projects and backgrounds, and tried to define 'deep maps' and 'spatial narratives'.

It's been pointed out that as we're at the 'bleeding edge', visions for deep mapping are still highly personal. As we don't yet have a shared definition I don't want to misrepresent people's ideas by summarising them, so I'm just posting my current definition of deep maps:

A deep map contains geolocated information from multiple sources that convey their source, contingency and context of creation; it is both integrated and queryable through indexes of time and space.

Essential characteristics: it can be a product, whether as a snapshot static map or as layers of interpretation with signposts and pre-set interactions and narrative, but is always visibly a process. It allows open-ended exploration (within the limitations of the data available and the curation processes and research questions behind it) and supports serendipitous discovery of content. It supports curiosity. It supports arguments but allows them to be interrogated through the mapped content. It supports layers of spatial narratives but does not require them. It should be compatible with humanities work: it's citable (e.g. provides URL that shows view used to construct argument) and provides access to its sources, whether as data downloads or citations. It can include different map layers (e.g. historic maps) as well as different data sources. It could be topological as well as cartographic. It must be usable at different scales: e.g. in user interface – when zoomed out provides sense of density of information within; e.g. as space – can deal with different levels of granularity.

Essential functions: it must be queryable and browseable. It must support large, variable, complex, messy, fuzzy, multi-scalar data. It should be able to include entities such as real and imaginary people and events as well as places within spaces. It should support both use for presentation of content and analytic use. It should be compelling – people should want to explore other places, times, relationships or sources. It should be intellectually immersive and support 'flow'.
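To make 'queryable through indexes of time and space' a little more concrete, here is a minimal Python sketch. It is my own toy illustration, not anything built at the Institute: the `DeepMapItem` class, the `query` function and all of the sample records are hypothetical, and a real deep map would need far more than a bounding box and a date range (this one does not even handle boxes that cross the antimeridian).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeepMapItem:
    """A geolocated record that carries its own source and context."""
    label: str
    lat: float
    lon: float
    year_from: int
    year_to: int
    source: str   # where the information came from
    context: str  # how and why the record was created


def query(items, south, west, north, east, start_year, end_year):
    """Return items inside the bounding box whose dates overlap the period."""
    return [
        item for item in items
        if south <= item.lat <= north
        and west <= item.lon <= east
        and item.year_from <= end_year
        and item.year_to >= start_year
    ]


# Entirely made-up sample data for illustration.
items = [
    DeepMapItem("Example congregation", 39.77, -86.16, 1850, 1900,
                "hypothetical parish register", "transcribed by a volunteer"),
    DeepMapItem("Example meeting hall", 39.80, -86.10, 1920, 1950,
                "hypothetical city directory", "digitised from microfilm"),
    DeepMapItem("Example chapel", 41.88, -87.63, 1860, 1880,
                "hypothetical newspaper notice", "keyed in during research"),
]

found = query(items, south=39.6, west=-86.4, north=39.9, east=-86.0,
              start_year=1840, end_year=1910)
print([item.label for item in found])  # ['Example congregation']
```

Keeping `source` and `context` on every record is the point of the sketch: whatever a query returns still tells you where it came from and how it was made.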

Looking at it now, the first part is probably pretty close to how I would have defined it at the start, but my thinking about what this actually means in terms of specifications is the result of the conversations over the past week and the experience everyone brings from their own research and projects.

For me, this Institute has been a chance to hang out with ace people with similar interests and different backgrounds – it might mean we spend some time trying to negotiate discipline-specific language but it also makes for a richer experience. It's a chance to work with wonderfully messy humanities data, and to work out how digital tools and interfaces can support ambiguous, subjective, uncertain, imprecise, rich, experiential content alongside the highly structured data GIS systems are good at. It's also a chance to test these ideas by putting them into practice with a dataset on religion in Indianapolis and learn more about deep maps by trying to build one (albeit in three days).

As part of thinking about what I think a deep map is, I found myself going back to an embarrassingly dated post on ideas for location-linked cultural heritage projects:

I've always been fascinated with the idea of making the invisible and intangible layers of history linked to any one location visible again. Millions of lives, ordinary or notable, have been lived in London (and in your city); imagine waiting at your local bus stop and having access to the countless stories and events that happened around you over the centuries. … The nice thing about local data is that there are lots of people making content; the not nice thing about local data is that it's scattered all over the web, in all kinds of formats with all kinds of 'trustability', from museums/libraries/archives, to local councils to local enthusiasts and the occasional raving lunatic. … Location-linked data isn't only about official cultural heritage data; it could be used to display, preserve and commemorate histories that aren't 'notable' or 'historic' enough for recording officially, whether that's grime pirate radio stations in East London high-rise roofs or the sites of Turkish social clubs that are now new apartment buildings. Museums might not generate that data, but we could look at how it fits with user-generated content and with our collecting policies.

Amusingly, four years ago my obsession with 'open sourcing history' was apparently already well-developed and I was asking questions about authority and trust that eventually informed my PhD – questions I hope we can start to answer as we try to make a deep map. Fun!

Finally, my thanks to the NEH and the Institute organisers and the support staff at the Polis Center and IUPUI for the opportunity to attend.

Frequently Asked Questions about crowdsourcing in cultural heritage

Over time I've noticed the repetition of various misconceptions and apprehensions about crowdsourcing for cultural heritage and digital history, so since this is a large part of my PhD topic I thought I'd collect various resources together as I work to answer some FAQs. I'll update this post over time in response to changes in the field, my research and comments from readers. While this is partly based on some writing for my PhD, I've tried not to be too academic and where possible I've gone for publicly accessible sources like blog posts rather than send you to a journal paywall.

If you'd rather watch a video than read, check out the Crowdsourcing Consortium for Libraries and Archives (CCLA)'s 'Crowdsourcing 101: Fundamentals and Case Studies' online seminar.

[Last updated: February 2016, to address 'crowdsourcing steals jobs'. Previous updates added a link to CCLA events, crowdsourcing projects to explore and a post on machine learning+crowdsourcing.]

What is crowdsourcing?

Definitions are tricky. Even Jeff Howe, the author of 'Crowdsourcing' has two definitions:

The White Paper Version: Crowdsourcing is the act of taking a job traditionally performed by a designated agent (usually an employee) and outsourcing it to an undefined, generally large group of people in the form of an open call.

The Soundbyte Version: The application of Open Source principles to fields outside of software.

For many reasons, the term 'crowdsourcing' isn't appropriate for many cultural heritage projects but the term is such neat shorthand that it'll stick until something better comes along. Trevor Owens (@tjowens) has neatly problematised this in The Crowd and The Library:

'Many of the projects that end up falling under the heading of crowdsourcing in libraries, archives and museums have not involved large and massive crowds and they have very little to do with outsourcing labor. … They are about inviting participation from interested and engaged members of the public [and] continue a long standing tradition of volunteerism and involvement of citizens in the creation and continued development of public goods'

Defining crowdsourcing in cultural heritage

To summarise my own thinking and the related literature, I'd define crowdsourcing in cultural heritage as an emerging form of engagement with cultural heritage that contributes towards a shared, significant goal or research area by asking the public to undertake tasks that cannot be done automatically, in an environment where the tasks, goals (or both) provide inherent rewards for participation.

Who is 'the crowd'?

Good question! One tension underlying the 'openness' of the call to participate in cultural heritage is the fact that there's often a difference between the theoretical reach of a project (i.e. everybody) and the practical reach, the subset of 'everybody' with access to the materials needed (like a computer and an internet connection), the skills, experience and time… While 'the crowd' may carry connotations of 'the mob', in 'Digital Curiosities: Resource Creation Via Amateur Digitisation', Melissa Terras (@melissaterras) points out that many 'amateur' content creators are 'extremely self motivated, enthusiastic, and dedicated' and test the boundaries 'between definitions of amateur and professional, work and hobby, independent and institutional', and quotes Leadbeater and Miller's 'The Pro-Am Revolution' on people who pursue an activity 'as an amateur, mainly for the love of it, but sets a professional standard'.

There's more and more talk of 'community-sourcing' in cultural heritage, and it's a useful distinction but it also masks the fact that nearly all crowdsourcing projects in cultural heritage involve a community rather than a crowd, whether they're the traditional 'enthusiasts' or 'volunteers', citizen historians, engaged audiences, whatever. That said, Amy Sample Ward has a diagram that's quite useful for planning how to work with different groups. It puts the 'crowd' (people you don't know), 'network' (the community of your community) and 'community' (people with a relationship to your organisation) in different rings based on their closeness to you.

'The crowd' is differentiated not just by their relationship to your organisation or by their skills and abilities, but also by their motivation for participating – some people participate in crowdsourcing projects for altruistic reasons, others because doing so furthers their own goals.

I'm worried about crowdsourcing because…

…isn't letting the public in like that just asking for trouble?

@lottebelice said she'd heard people worry that 'people are highly likely to troll and put in bad data/content/etc on purpose' – but this rarely happens. People worried about this with user-generated content, too, and while kids in galleries delight in leaving rude messages about each other, it's rare online.

It's much more likely that people will mistakenly add bad data, but a good crowdsourcing project should build any necessary data validation into the project. Besides, there are generally much more interesting places to troll than a cultural heritage site.

And as Matt Popke pointed out in a comment, 'When you have thousands of people contributing to an entry you have that many more pairs of eyes watching it. It's like having several hundred editors and fact-checkers. Not all of them are experts, but not all of them have to be. The crowd is effectively self-policing because when someone trolls an entry, somebody else is sure to notice it, and they're just as likely to fix it or report the issue'. If you're really worried about this, an earlier post on 'Designing for participatory projects: emergent best practice' has some other tips.
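As a rough idea of what building data validation into a project might look like, here is a small Python sketch. It assumes one particular approach, asking several people to transcribe the same item and only accepting a reading once enough of them agree; the `normalise` and `consensus` functions and the `min_agreement` threshold are hypothetical names for this example, and real projects handle validation in many different ways.

```python
from collections import Counter
from typing import Optional


def normalise(text: str) -> str:
    """Lower-case and collapse whitespace so trivial differences don't count."""
    return " ".join(text.lower().split())


def consensus(transcriptions: list[str], min_agreement: int = 2) -> Optional[str]:
    """Return the most common normalised reading if enough contributors agree.

    Returns None when there is no reading with at least `min_agreement` votes,
    which a project might treat as 'send this item to someone else to check'.
    """
    if not transcriptions:
        return None
    counts = Counter(normalise(t) for t in transcriptions)
    reading, votes = counts.most_common(1)[0]
    return reading if votes >= min_agreement else None


print(consensus(["John  Smith", "john smith", "Jon Smith"]))  # john smith
print(consensus(["John Smith", "Jon Smyth"]))  # None
```

A mistaken (or mischievous) entry simply fails to reach agreement, so it never needs to be spotted by hand.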

…doesn't crowdsourcing take advantage of people?

Sadly, yes, some of the activities that are labelled 'crowdsourcing' do. Design competitions that expect lots of people to produce full designs and pay a pittance (if anything) to the winner are rightly hated. (See antispec.com for more and a good list of links).

But in cultural heritage, no. Museums, galleries, libraries, archives and academic projects are in the fortunate position of having interesting work that involves an element of social good, and they also have hugely varied work, from microtasks to co-curated research projects. Crowdsourcing is part of a long tradition of volunteering and altruistic participation, and to quote Owens again, 'Crowdsourcing is a concept that was invented and defined in the business world and it is important that we recast it and think through what changes when we bring it into cultural heritage.'

[Update, May 2013: it turns out museums aren't immune from the dangers of design competitions and spec work: I've written On the trickiness of crowdsourcing competitions to draw some lessons from the Sydney Design competition kerfuffle.]

Anyway, crowdsourcing won't usually work if it's not done right. From A Crowd Without Community – Be Wary of the Mob:

"when you treat a crowd as disposable and anonymous, you prevent them from achieving their maximum ability. Disposable crowds create disposable output. Simply put: crowds need a sense of identity and community to achieve their potential."

…crowdsourcing can't be used for academic work

Reasons given include 'humanists don't like to share their knowledge' with just anyone. And it's possible that they don't, but as projects like Transcribe Bentham and Trove show, academics and other researchers will share the work that helps produce that knowledge. (This is also something I'm examining in my PhD. I'll post some early findings after the Digital Humanities 2012 conference in July).

Looking beyond transcription and other forms of digitisation, it's worth checking out Prism, 'a digital tool for generating crowd-sourced interpretations of texts'.

…it steals jobs

Once upon a time, people starting a career in academia or cultural heritage could get jobs as digitisation assistants, or they could work on a scholarly edition. Sadly, that's not the case now, but that's probably more to do with year upon year of funding cuts. Blame the bankers, not the crowdsourcers.

The good news? Crowdsourcing projects can create jobs – participatory projects need someone to act as community liaison, to write the updates that demonstrate the impact of crowdsourced contributions, to explain the research value of the project, to help people integrate it into teaching, to organise challenges and editathons and more.

What isn't crowdsourcing?

…'the wisdom of the crowds'?

Which is not just another way of saying 'crowd psychology', either (another common furphy). As Wikipedia puts it, 'the wisdom of the crowds' is based on 'diverse collections of independently-deciding individuals'. Handily, Trevor Owens has just written a post addressing the topic: Human Computation and Wisdom of Crowds in Cultural Heritage.

…user-generated content

So what's the difference between crowdsourcing and user-generated content? The lines are blurry, but crowdsourcing is inherently productive – the point is to get a job done, whether that's identifying people or things, creating content or digitising material.

Conversely, the value of user-generated content lies in the act of creating it rather than in the content itself – for example, museums might value the engagement in a visitor thinking about a subject or object and forming a response to it in order to comment on it. Once posted it might be displayed as a comment or counted as a statistic somewhere but usually that's as far as it goes.

And as @sherah1918 pointed out, there's a difference between asking for assistance with tasks and asking for feedback or comments: 'A comment book or a blog w/comments isn't crowdsourcing to me … nor is asking ppl to share a story on a web form. That is a diff appr to collecting & saving personal histories, oral histories'.

…other things that aren't crowdsourcing:

[Heading inspired by Sheila Brennan @sherah1918]

- Crowdfunding (it's often just asking for micro-donations, though it seems that successful crowdfunding projects have a significant public engagement component, which brings them closer to the concerns of cultural heritage organisations. It's also not that new. See Seventeenth-century crowd funding for one example.)

- Data-mining social media and other content (though I've heard this called 'passive' or 'implicit' crowdsourcing)

- Human computation (though it might be combined with crowdsourcing)

- Collective intelligence (though it might also be combined with crowdsourcing)

- General calls for content, help or participation (see 'user-generated content') or vaguely asking people what they think about an idea. Asking for feedback is not crowdsourcing. Asking for help with your homework isn't crowdsourcing, as it only benefits you.

- Buzzwords applied to marketing online. And as @emmclean said, "I think many (esp mkting) see "crowdsourcing" as they do "viral" – just happens if you throw money at it. NO!!! Must be great idea" – it must make sense as a crowdsourced task.

Ok, so what's different about crowdsourcing in cultural heritage?

For a start, the process is as valuable as the result. Owens has a great post on this, Crowdsourcing Cultural Heritage: The Objectives Are Upside Down, where he says:

'The process of crowdsourcing projects fulfills the mission of digital collections better than the resulting searches… Far better than being an instrument for generating data that we can use to get our collections more used it is actually the single greatest advancement in getting people using and interacting with our collections. … At its best, crowdsourcing is not about getting someone to do work for you, it is about offering your users the opportunity to participate in public memory … it is about providing meaningful ways for the public to enhance collections while more deeply engaging and exploring them'.

And as I've said elsewhere, 'playing [crowdsourcing] games with museum objects can create deeper engagement with collections while providing fun experiences for a range of audiences'. (For definitions of 'engagement' see The Culture and Sport Evidence (CASE) programme. (2011). Evidence of what works: evaluated projects to drive up engagement (PDF).)

What about cultural heritage and citizen science?

[This was written in 2012. I've kept it for historical reasons but think differently now.]

First, another definition. As Fiona Romeo writes, 'Citizen science projects use the time, abilities and energies of a distributed community of amateurs to analyse scientific data. In doing so, such projects further both science itself and the public understanding of science'. As Romeo points out in a different post, 'All citizen science projects start with well-defined tasks that answer a real research question', while citizen history projects rarely if ever seem to be based around specific research questions but are aimed more generally at providing data for exploration. Process vs product?

I'm still thinking through the differences between citizen science and citizen history, particularly where they meet in historical projects like Old Weather. Both citizen science and citizen history achieve some sort of engagement with the mindset and work of the equivalent professional occupations, but are the traditional differences between scientific and humanistic enquiry apparent in crowdsourcing projects? Are tools developed for citizen science suitable for citizen history? Does it make a difference that it's easier to take a new interest in history further without a big investment in learning and access to equipment?

I have a feeling that 'citizen science' projects are often more focused on the production of data as accurately and efficiently as possible, and 'citizen history' projects end up being as much about engaging people with the content as they are about content production. But I'm very open to challenges on this…

What kind of cultural heritage stuff can be crowdsourced?

I wrote this list of 'Activity types and data generated' over a year ago for my Masters dissertation on crowdsourcing games for museums and a subsequent paper for Museums and the Web 2011, Playing with Difficult Objects – Game Designs to Improve Museum Collections (which also lists validation types and requirements). This version should be read in the light of discussion about the difference between crowdsourcing and user-generated content and in the context of things people can do with museums and with games, but it'll do for now:

| Activity | Data generated |
| --- | --- |
| Tagging (e.g. steve.museum, Brooklyn Museum Tag! You're It; variations include two-player 'tag agreement' games like Waisda?, extensions such as guessing games e.g. GWAP ESP Game, Verbosity, Tiltfactor Guess What?; structured tagging/categorisation e.g. GWAP Verbosity, Tiltfactor Cattegory) | Tags; folksonomies; multilingual term equivalents; structured tags (e.g. 'looks like', 'is used for', 'is a type of'). |
| Debunking (e.g. flagging content for review and/or researching and providing corrections). | Flagged dubious content; corrected data. |
| Recording a personal story | Oral histories; contextualising detail; eyewitness accounts. |
| Linking (e.g. linking objects with other objects, objects to subject authorities, objects to related media or websites; e.g. MMG Donald). | Relationship data; contextualising detail; information on history, workings and use of objects; illustrative examples. |
| Stating preferences (e.g. choosing between two objects e.g. GWAP Matchin; voting on or 'liking' content). | Preference data; subsets of 'highlight' objects; 'interestingness' values for content or objects for different audiences. May also provide information on reason for choice. |
| Categorising (e.g. applying structured labels to a group of objects, collecting sets of objects or guessing the label for or relationship between presented set of objects). | Relationship data; preference data; insight into audience mental models; group labels. |
| Creative responses (e.g. write an interesting fake history for a known object or purpose of a mystery object.) | Relevance; interestingness; ability to act as social object; insight into common misconceptions. |

You can also divide crowdsourcing projects into 'macro' and 'micro' tasks – giving people a goal and letting them solve it as they prefer, vs small, well-defined pieces of work, as in the 'Umbrella of Crowdsourcing' at The Daily Crowdsource. There's also a fair bit of academic literature on other ways of categorising and describing crowdsourcing.

Using crowdsourcing to manage crowdsourcing

There's also a growing body of literature on ecosystems of crowdsourcing activities, where different tasks and platforms target different stages of the process. A great example is Brooklyn Museum’s ‘Freeze Tag!’, a game that cleans up data added in their tagging game. An ecosystem of linked activities (or games) can maximise the benefits of a diverse audience by providing a range of activities designed for different types of participant skills, knowledge, experience and motivations; and can encompass different levels of participation from liking, to tagging, finding facts and links.

A participatory ecosystem can also resolve some of the difficulties around validating specialist tags or long-form, more subjective content by circulating content between activities for validation and ranking for correctness, 'interestingness' (etc) by other players (see for example the 'Contributed data lifecycle' diagram on my MW2011 paper or the 'Digital Content Life Cycle' for crowdsourcing in Oomen and Aroyo's paper below). As Nina Simon said in The Participatory Museum, 'By making it easy to create content but impossible to sort or prioritize it, many cultural institutions end up with what they fear most: a jumbled mass of low-quality content'. Crowdsourcing the improvement of cultural heritage data would also make possible non-crowdsourcing engagement projects that need better content to be viable.

See also Raddick, MJ, and Georgia Bracey. 2009. “Citizen Science: Status and Research Directions for the Coming Decade” on bridging between old and new citizen science projects to aid volunteer retention, and Nov, Oded, Ofer Arazy, and David Anderson. 2011. “Dusting for Science: Motivation and Participation of Digital Citizen Science Volunteers” on creating 'dynamic contribution environments that allow volunteers to start contributing at lower-level granularity tasks, and gradually progress to more demanding tasks and responsibilities'.
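The idea of circulating contributed content between activities for validation can be sketched in a few lines of Python. This is only an illustration of the general pattern (one activity creates tags, a second activity lets other participants vote on them), not a description of how 'Freeze Tag!' or any other real project is implemented; the `Tag` class, the `review` function, the status names and the threshold of three votes are all assumptions invented for this example.

```python
from dataclasses import dataclass


@dataclass
class Tag:
    text: str
    keep_votes: int = 0
    remove_votes: int = 0
    status: str = "contributed"  # contributed -> validated | rejected


def review(tag, keep, threshold=3):
    """Record one vote from the clean-up activity and update the tag's status.

    The threshold of three votes is an arbitrary, illustrative choice.
    """
    if keep:
        tag.keep_votes += 1
    else:
        tag.remove_votes += 1
    # A tag changes status once enough reviewers agree in either direction.
    if tag.keep_votes >= threshold:
        tag.status = "validated"
    elif tag.remove_votes >= threshold:
        tag.status = "rejected"
    return tag.status
```

A tag added in the tagging activity starts as 'contributed'; after three other participants vote to keep it in the clean-up activity it becomes 'validated', and after three votes to remove it, it becomes 'rejected'. A fuller ecosystem might add further statuses, or route validated tags on to a ranking activity for 'interestingness'.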

What does the future of crowdsourcing hold?

Platforms aimed at bootstrapping projects – that is, getting new projects up and running as quickly and as painlessly as possible – seem to be the next big thing. Designing tasks and interfaces suitable for mobile and tablets will allow even more of us to help out while killing time. There's also a lot of work on the integration of machine learning and human computation; my post 'Helping us fly? Machine learning and crowdsourcing' has more on this.

Find out how crowdsourcing in cultural heritage works by exploring projects

Spend a few minutes with some of the projects listed in Looking for (crowdsourcing) love in all the right places to really understand how and why people participate in cultural heritage crowdsourcing.

Where can I find out more? (AKA, a reading list in disguise)

- Read the original article about crowdsourcing, published in the June, 2006 issue of Wired Magazine or Jeff Howe's book, Crowdsourcing: why the power of the crowd is driving the future of business.

- My MW2011 paper, Playing with Difficult Objects – Game Designs to Improve Museum Collections and (deep breath) I've posted my MSc dissertation 'Playing with difficult objects: game designs for crowdsourcing museum metadata' online

- From tagging to theorizing: deepening engagement with cultural heritage through crowdsourcing. Curator: The Museum Journal, 56(4) pp. 435–450 (Mia Ridge; link is to university repository version for those who don't have access to Curator)

- Workshop activities and notes for 'Crowdsourcing in Libraries, Museums and Cultural Heritage Institutions' (for the British Library's Digital Scholarship programme)

- Trevor Owens' blog on 'User Centered Digital History'

- Rose Holley's (of Trove fame) blog

- @benwbrum's Collaborative Manuscript Transcription blog

- Peer-to-peer foundation crowdsourcing page

- steve.museum reports – the first museum tagging project

- Why Crowdsourcing? Why Scripto?

- Clay Shirky, Cognitive Surplus: Creativity and Generosity in a Connected Age

- Crowdsourcing in the Cultural Heritage Domain: Opportunities and Challenges, by Johan Oomen and Lora Aroyo (PDF).

- Bringing Citizen Scientists and Historians Together

- Final report on 'crowd-sourcing in the humanities' of the AHRC Crowd Sourcing Study

- Accessible resources on doing crowdsourcing well: try How to Effectively Use Incentives in your Crowdsourcing Project, The Magic of Participation, Building and Maintaining a Vibrant, Creative Crowd.

- If you're into mapping, geography or just geospatial work, check out volunteered geographic information (VGI).

- Crowdfunding Culture: Namaste, and Welcome to the Smithsonian lists a hit (yoga, a popular topic) and a miss in crowdfunding at the Smithsonian. You might also find CrowdFunding Website Reviews useful.

There's a lot of academic literature on all kinds of aspects of crowdsourcing, but I've gone for sources that are accessible both intellectually and in terms of licensing. If a key reference isn't there, it might be because I can't find a pre-print or whatever outside a paywall – let me know if you know of one!

Liked this post? Buy the book! 'Crowdsourcing Our Cultural Heritage' is available through Ashgate or your favourite bookseller…

Thanks, and over to you!

Thanks to everyone who responded to my call for their favourite 'misconceptions and apprehensions about crowdsourcing (esp in history and cultural heritage)', and to those who inspired this post in the first place by asking questions in various places about the negative side of crowdsourcing. I'll update the post as I hear of more, so let me know your favourites. I'll also keep adding links and resources as I hear of them.

You might also be interested in: Notes from 'Crowdsourcing in the Arts and Humanities' and various crowdsourcing classes and workshops I've run over the past few years.

Notes on current issues in Digital Humanities

In July 2011, the Open University held a colloquium called ‘Digital technologies: help or hindrance for the humanities?’, in part to celebrate the launch of the Thematic Research Network for Digital Humanities at the OU. A full multi-author report about the colloquium (titled 'Colloquium: Digital Technologies: Help or Hindrance for the Humanities?') will be coming out in the 'Digital Futures Special Issue Arts and Humanities in HE' edition of Arts and Humanities in Higher Education soon, but a workshop was also held at the OU's Milton Keynes campus on Thursday to discuss some of the key ideas that came from the colloquium and to consider the agenda for the thematic research network. I was invited to present in the workshop, and I've shared my notes and some comments below (though of course the spoken version varied slightly).

To help focus the presentations, Professor John Wolffe (who was chairing) suggested we address the following points:

- What, for you, were the two most important insights arising from last July’s colloquium?

- What should be the two key priorities for the OU’s DH thematic research network over the next year, and why?

Introduction – who I am as context for how I saw the colloquium

Before I started my PhD, I was a digital practitioner – a programmer, analyst, bearer of Zeitgeisty made-up modern job titles – situated in an online community of technologists loosely based in academia, broadcasting, libraries, archives, and particularly, in public history and museums. That's really only interesting in the context of this workshop because my digital community is constituted by the very things that challenge traditional academia – ad hoc collaboration, open data, publicly sharing and debating thoughts in progress.

For people who happily swim in this sea, it's hard to realise how new and scary it can be, but just yesterday I was reminded how challenging the idea of a public identity on social media is for some academics, let alone the thought of finding time to learn and understand yet another tool. As a humanist-turned-technologist-turned-humanist, I have sympathy for the perspective of both worlds.

The two most important insights arising from last July’s colloquium?

John Corrigan's introduction made it clear that the answer to the question 'what is digital humanities' is still very open, and has perhaps as many different answers as there are humanists. That's both exciting and challenging – it leaves room for the adaptation (and adoption) of DH by different humanities disciplines, but it also makes it difficult to develop a shared language for collaboration, for critiquing and peer reviewing DH projects and outputs… [I've also been wondering whether 'digital humanities' would eventually devolve into the practices of disciplines – digital history, etc – and how much digital humanities really works across different humanities disciplines in a meaningful way, but that's a question for another day.]

In my notes, it was the discussion around Chris Bissel's paper on 'Reality and authenticity', Google Earth and archaeology that also stood out – the questions about what's lost and gained in the digital context are important, but, as a technologist, I ask us to be wary of false dichotomies. There's a danger in conflating the materiality of a resource, the seductive aura of an original document, the difficulties in accessing it, in getting past the gatekeepers, with the quality of the time spent with it; with the intrinsic complexity of access, context, interpretation… The sometimes difficult physical journey to an archive, or the smell of old books is not the same as earned access to knowledge.

What should be the two key priorities for the OU’s DH thematic research network over the next year?

[I don't think I did a very good job answering this, perhaps because I still feel too new to know what's already going on and what could be added. Also, I'm apparently unable to limit myself to two.]

I tend to believe that the digital humanities will eventually become normalised as just part of how humanities work, but we need to be careful about how that actually happens.

The early adopters have blazed their trails and lit the way, but in their wake, they've left the non-early adopters – the ordinary humanist – blinking and wondering how to thrive in this new world. I have a sense that digital humanities is established enough, or at least the impact of digitisation projects has been broad enough, that the average humanist is expected to take on the methods of the digital humanist in their grant and research proposals and in their teaching – but has the ordinary humanist been equipped with the skills and training and the access to technologists and collaborators to thrive? Do we need to give everyone access to DH101?

We need to deal with the challenges of interdisciplinary collaboration, particularly publication models, peer review and the inescapable REF. We need to understand how to judge the processes as well as the products of research projects, and to find better ways to recognise new forms of publication, particularly as technology is also disrupting the publication models that early career researchers used to rely on to get started.

Much of the critique of digital working was about what it let people get away with, or how it risks misleading the innocent researcher. As with anything on a screen, there's an illusion of accuracy, completeness, neatness. We need shared practices to critique visualisations and discuss what's really available in database searches, the representativeness of digital repositories, the quality of transcriptions and metadata, the context in which data was created and knowledge produced… Translating the slipperiness of humanities data and research questions into a digital world is a juicy challenge but it's necessary if the potential of DH is to be exploited, whether by humanities scholars or the wider public who have new access to humanities content.

Digitality is no excuse to let students (or other researchers) get away with sloppy practice. The ability to search across millions of records is important, but you should treat the documents you find as rigorously as you'd treat something uncovered deep in the archives. Slow, deep reading, considering the pages or documents adjacent to the one that interests you, the serendipitous find – these are all still important. But we also need to help scholars find ways to cope with the sheer volume of data now available and the probably unrealistic expectations of complete coverage of all potential sources this may create. So my other key priority is working out and teaching the scholarly practices we need to ensure we survive the transition from traditional to digital humanities.

In conclusion, the same issues – trust, authority, the context of knowledge production – are important for my digital and my humanities communities, but these concepts are expressed very differently in each. We need to work together to build bridges between the practices of traditional academia and those of the digital humanities.

Quick PhD update from InterFace 2011

It feels like ages since I've posted, so since I've had to put together a 2-minute lightning talk for the InterFace 2011 conference at UCL (for people working in the intersection of humanities and technology), I thought I'd post it here as an update. I'm a few months into the PhD but am still very much working out the details of the shape of my project and I expect that how my core questions around crowdsourcing, digitisation, geolocation, researchers and historical materials fit together will change as I get further into my research. [Basically I'm acknowledging that I may look back at this and cringe.]

Notes for 2-minute lightning talk, InterFace 2011

'Crowdsourcing the geolocation of historical materials through participant digitisation'

Hi, I'm Mia, I'm working on a PhD in Digital Humanities in the History department at the Open University.

I'm working on issues around crowdsourcing the digitisation and geolocation of historical materials. I'm looking at 'participant digitisation' so I'll be conducting research and building tools to support various types of researchers in digitising, transcribing and geolocating primary and secondary sources.

I'll also create a spatial interface that brings together the digitised content from all participant digitisers. The interface will support the management of sources based on what I've learned about how historians evaluate potential sources.

The overall process has three main stages: research and observation that leads to iterative cycles of designing, building and testing the interfaces, and finally evaluation and analysis on the tools and the impact of geolocated (ad hoc) collections on the practice of historical research.

My PhD proposal (Provisional title: Participatory digitisation of spatially indexed historical data)

[Update: I'm working on a shorter version with fewer long words. Something like crowdsourcing geolocated historical materials/artefacts with specialist users/academic contributors/citizen historians.]

A few people have asked me about my PhD* topic, and while I was going to wait until I'd started and had a chance to review it in light of the things I'm already starting to learn about what else is going on in the field, I figured I should take advantage of having some pre-written material to cover the gap in blogging while I try to finish various things (like, um, my MSc dissertation) that were hijacked by a broken wrist. So, to keep you entertained in the meantime, here it is.

Please bear in mind that it's already out-of-date in terms of my thinking and sense of what's already happening in the field – I'm really looking forward to diving into it but my plan to spend some time thinking about the project before I started has been derailed by what felt like a year of having an arm in a cast.

* I never got around to posting about this because my disastrous slip on the ice happened just two days after I resigned, but I'm leaving my job at the Science Museum to take up the offer of a full-time PhD in Digital Humanities at the Open University in mid-March.

Provisional title: Participatory digitisation of spatially indexed historical data

This project aims to investigate 'participatory digitisation' models for geo-located historical material.

This project begins with the assumption that researchers are already digitising and geo-locating materials and asks whether it is possible to create systems to capture and share this data. Could the digital records and knowledge generated when researchers access primary materials be captured at the point of creation and published for future re-use? Could the links between materials, and between materials and locations, created when researchers use aggregated or mass-digitised resources, be 'mined' for re-use?

Through the use of a case study based around discovering, collating, transforming and publishing geo-located resources related to early scientific women, the project aims to discover:

- how geo-located materials are currently used and understood by researchers

- what types of tools can be designed to encourage researchers to share records digitised for their own personal use

- whether tools can be designed to allow non-geospatial specialists to accurately record and discover geo-spatial references

- the viability of using online geo-coding and text mining services on existing digitised resources

Possible outcomes include an evaluation of spatially-oriented approaches to digital heritage resource discovery and use; mental models of geographical concepts in relation to different types of historical material and research methods; contributions to research on crowdsourcing digital heritage resources (particularly the tensions between competition and co-operation, between the urge to hoard or share resources) and prototype interfaces or applications based on the case study.

The project also provides opportunities to reflect on what it means to generate as well as consume digital data in the course of research, and on the changes digital opportunities have created for the arts and humanities researcher.

** This case study is informed by my thinking around the possibilities of re-populating the landscape with references to the lives, events, objects, etc, held by museums and other cultural heritage institutions, e.g. outside museum walls, and by an experimental, collaborative project around 'modern bluestockings' that aimed to locate and re-display the forgotten stories around unconventional and pioneering women in science, technology and academia.