Some time ago I wrote a chapter on 'Crowdsourcing in cultural heritage: a practical guide to designing and running successful projects' for the Routledge International Handbook of Research Methods in Digital Humanities, edited by Kristen Schuster and Stuart Dunn. As their blurb says, the volume 'draws on both traditional and emerging fields of study to consider what a grounded definition of quantitative and qualitative research in the Digital Humanities (DH) might mean; which areas DH can fruitfully draw on in order to foster and develop that understanding; where we can see those methods applied; and what the future directions of research methods in Digital Humanities might look like'.

Inspired by a post from the authors of a chapter in the same volume (Opening the ‘black box’ of digital cultural heritage processes: feminist digital humanities and critical heritage studies by Hannah Smyth, Julianne Nyhan & Andrew Flinn), I'm sharing something about what I wanted to do in my chapter.

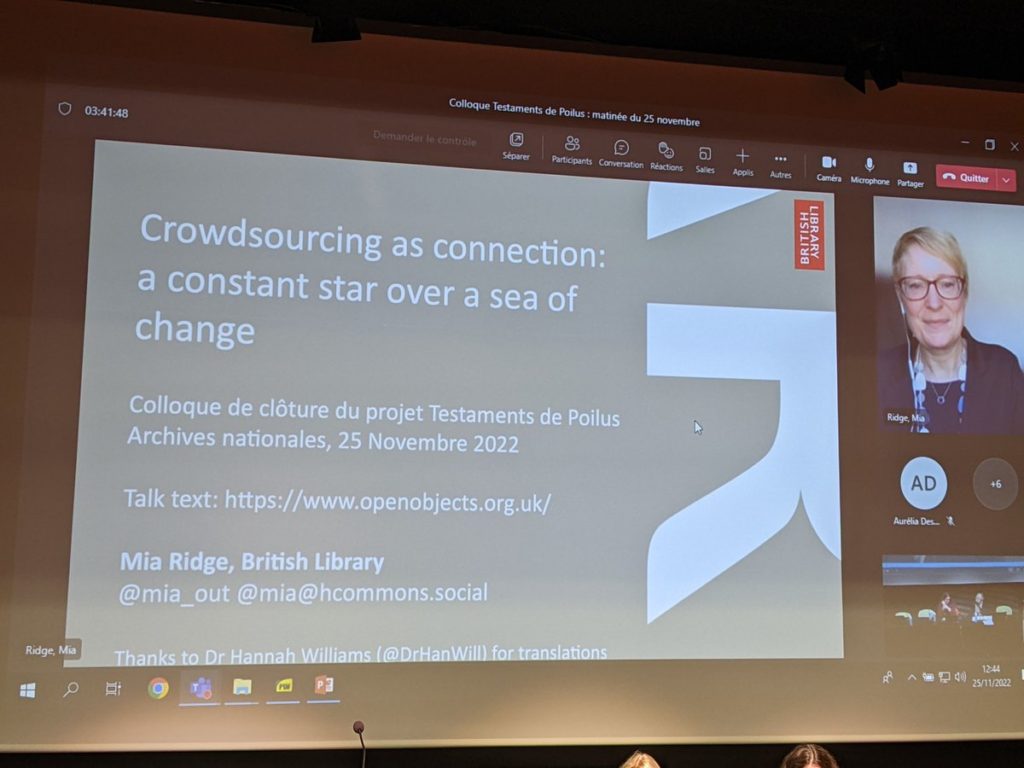

As the title suggests, I wanted to provide practical insights for cultural heritage and digital humanities practitioners. Writing for a Handbook of Research Methods in Digital Humanities was an opportunity to help researchers understand both how to apply the 'method' and how the 'behind the scenes' work affects the outcomes. As a method, crowdsourcing in cultural heritage draws on many other methods and disciplines. The chapter built on my doctoral research, and my ideas were road-tested at many workshops, classes and conferences.

Rather than crib from my introduction (which you can read in a pre-edited version online), I've included the headings from the chapter as a guide to the contents:

- An introduction to crowdsourcing in cultural heritage

- Key conceptual and research frameworks

- Fundamental concepts in cultural heritage crowdsourcing

- Why do cultural heritage institutions support crowdsourcing projects?

- Why do people contribute to crowdsourcing projects?

- Turning crowdsourcing ideas into reality

- Planning crowdsourcing projects

- Defining 'success' for your project

- Managing organisational impact

- Choosing source collections

- Planning workflows and data re-use

- Planning communications and participant recruitment

- Final considerations: practical and ethical ‘reality checks’

- Developing and testing crowdsourcing projects

- Designing the ‘onboarding’ experience

- Task design

- Documentation and tutorials

- Quality control: validation and verification systems

- Rewards and recognition

- Running crowdsourcing projects

- Launching a project

- The role of participant discussion

- Ongoing community engagement

- Planning a graceful exit

- The future of crowdsourcing in cultural heritage

- Thanks and acknowledgements

I wrote in the open on this Google Doc: 'Crowdsourcing in cultural heritage: a practical guide to designing and running successful projects', and benefited from the feedback I got during that process, so this post is also an opportunity to highlight and reiterate my 'Thanks and acknowledgements' section:

I would like to thank participants and supporters of crowdsourcing projects I’ve created, including Museum Metadata Games, In their own words: collecting experiences of the First World War, and In the Spotlight. I would also like to thank my co-organisers and attendees at the Digital Humanities 2016 Expert Workshop on the future of crowdsourcing. Especial thanks to the participants in courses and workshops on ‘crowdsourcing in cultural heritage’, including the British Library’s Digital Scholarship training programme, the HILT Digital Humanities summer school (once with Ben Brumfield) and scholars at other events where the course was held, whose insights, cynicism and questions have informed my thinking over the years. Finally, thanks to Meghan Ferriter and Victoria Van Hyning for their comments on this manuscript.

References for Crowdsourcing in cultural heritage: a practical guide to designing and running successful projects

Alam, S. L., & Campbell, J. (2017). Temporal Motivations of Volunteers to Participate in Cultural Crowdsourcing Work. Information Systems Research. https://doi.org/10.1287/isre.2017.0719

Bedford, A. (2014, February 16). Instructional Overlays and Coach Marks for Mobile Apps. Retrieved 12 September 2014, from Nielsen Norman Group website: http://www.nngroup.com/articles/mobile-instructional-overlay/

Berglund Prytz, Y. (2013, June 24). The Oxford Community Collection Model. Retrieved 22 October 2018, from RunCoCo website: http://blogs.it.ox.ac.uk/runcoco/2013/06/24/the-oxford-community-collection-model/

Bernstein, S. (2014). Crowdsourcing in Brooklyn. In M. Ridge (Ed.), Crowdsourcing Our Cultural Heritage. Retrieved from http://www.ashgate.com/isbn/9781472410221

Bitgood, S. (2010). An attention-value model of museum visitors (pp. 1–29). Retrieved from Center for the Advancement of Informal Science Education website: http://caise.insci.org/uploads/docs/VSA_Bitgood.pdf

Bonney, R., Ballard, H., Jordan, R., McCallie, E., Phillips, T., Shirk, J., & Wilderman, C. C. (2009). Public Participation in Scientific Research: Defining the Field and Assessing Its Potential for Informal Science Education. A CAISE Inquiry Group Report (pp. 1–58). Retrieved from Center for Advancement of Informal Science Education (CAISE) website: http://caise.insci.org/uploads/docs/PPSR%20report%20FINAL.pdf

Brohan, P. (2012, July 23). One million, six hundred thousand new observations. Retrieved 30 October 2012, from Old Weather Blog website: http://blog.oldweather.org/2012/07/23/one-million-six-hundred-thousand-new-observations/

Brohan, P. (2014, August 18). In search of lost weather. Retrieved 5 September 2014, from Old Weather Blog website: http://blog.oldweather.org/2014/08/18/in-search-of-lost-weather/

Brumfield, B. W. (2012a, March 5). Quality Control for Crowdsourced Transcription. Retrieved 9 October 2013, from Collaborative Manuscript Transcription website: http://manuscripttranscription.blogspot.co.uk/2012/03/quality-control-for-crowdsourced.html

Brumfield, B. W. (2012b, March 17). Crowdsourcing at IMLS WebWise 2012. Retrieved 8 September 2014, from Collaborative Manuscript Transcription website: http://manuscripttranscription.blogspot.com.au/2012/03/crowdsourcing-at-imls-webwise-2012.html

Budiu, R. (2014, March 2). Login Walls Stop Users in Their Tracks. Retrieved 7 March 2014, from Nielsen Norman Group website: http://www.nngroup.com/articles/login-walls/

Causer, T., & Terras, M. (2014). ‘Many Hands Make Light Work. Many Hands Together Make Merry Work’: Transcribe Bentham and Crowdsourcing Manuscript Collections. In M. Ridge (Ed.), Crowdsourcing Our Cultural Heritage. Retrieved from http://www.ashgate.com/isbn/9781472410221

Causer, T., & Wallace, V. (2012). Building A Volunteer Community: Results and Findings from Transcribe Bentham. Digital Humanities Quarterly, 6(2). Retrieved from http://www.digitalhumanities.org/dhq/vol/6/2/000125/000125.html

Cheng, J., Teevan, J., Iqbal, S. T., & Bernstein, M. S. (2015, April). Break It Down: A Comparison of Macro- and Microtasks. 4061–4064. https://doi.org/10.1145/2702123.2702146

Clary, E. G., Snyder, M., Ridge, R. D., Copeland, J., Stukas, A. A., Haugen, J., & Miene, P. (1998). Understanding and assessing the motivations of volunteers: A functional approach. Journal of Personality and Social Psychology, 74(6), 1516–30.

Collings, R. (2014, May 5). The art of computer image recognition. Retrieved 25 May 2014, from The Public Catalogue Foundation website: http://www.thepcf.org.uk/what_we_do/48/reference/862

Collings, R. (2015, February 1). The art of computer recognition. Retrieved 22 October 2018, from Art UK website: https://artuk.org/about/blog/the-art-of-computer-recognition

Crowdsourcing Consortium. (2015). Engaging the Public: Best Practices for Crowdsourcing Across the Disciplines. Retrieved from http://crowdconsortium.org/

Crowley, E. J., & Zisserman, A. (2016). The Art of Detection. Presented at the Workshop on Computer Vision for Art Analysis, ECCV. Retrieved from https://www.robots.ox.ac.uk/~vgg/publications/2016/Crowley16/crowley16.pdf

Csikszentmihalyi, M., & Hermanson, K. (1995). Intrinsic Motivation in Museums: Why Does One Want to Learn? In J. Falk & L. D. Dierking (Eds.), Public institutions for personal learning: Establishing a research agenda (pp. 66–77). Washington D.C.: American Association of Museums.

Dafis, L. L., Hughes, L. M., & James, R. (2014). What’s Welsh for ‘Crowdsourcing’? Citizen Science and Community Engagement at the National Library of Wales. In M. Ridge (Ed.), Crowdsourcing Our Cultural Heritage. Retrieved from http://www.ashgate.com/isbn/9781472410221

Das Gupta, V., Rooney, N., & Schreibman, S. (n.d.). Notes from the Transcription Desk: Modes of engagement between the community and the resource of the Letters of 1916. Digital Humanities 2016: Conference Abstracts. Presented at the Digital Humanities 2016, Kraków. Retrieved from http://dh2016.adho.org/abstracts/228

De Benetti, T. (2011, June 16). The secrets of Digitalkoot: Lessons learned crowdsourcing data entry to 50,000 people (for free). Retrieved 9 January 2012, from Microtask website: http://blog.microtask.com/2011/06/the-secrets-of-digitalkoot-lessons-learned-crowdsourcing-data-entry-to-50000-people-for-free/

de Boer, V., Hildebrand, M., Aroyo, L., De Leenheer, P., Dijkshoorn, C., Tesfa, B., & Schreiber, G. (2012). Nichesourcing: Harnessing the power of crowds of experts. Proceedings of the 18th International Conference on Knowledge Engineering and Knowledge Management, EKAW 2012, 16–20. Retrieved from http://dx.doi.org/10.1007/978-3-642-33876-2_3

DH2016 Expert Workshop. (2016, July 12). DH2016 Crowdsourcing workshop session overview. Retrieved 5 October 2018, from DH2016 Expert Workshop: Beyond The Basics: What Next For Crowdsourcing? website: https://docs.google.com/document/d/1sTII8P67mOFKWxCaAKd8SeF56PzKcklxG7KDfCRUF-8/edit?usp=drive_open&ouid=0&usp=embed_facebook

Dillon-Scott, P. (2011, March 31). How Europeana, crowdsourcing & wiki principles are preserving European history. Retrieved 15 February 2015, from The Sociable website: http://sociable.co/business/how-europeana-crowdsourcing-wiki-principles-are-preserving-european-history/

DiMeo, M. (2014, February 3). First Monday Library Chat: University of Iowa’s DIY History. Retrieved 7 September 2014, from The Recipes Project website: http://recipes.hypotheses.org/3216

Dunn, S., & Hedges, M. (2012). Crowd-Sourcing Scoping Study: Engaging the Crowd with Humanities Research (p. 56). Retrieved from King’s College website: http://www.humanitiescrowds.org

Dunn, S., & Hedges, M. (2013). Crowd-sourcing as a Component of Humanities Research Infrastructures. International Journal of Humanities and Arts Computing, 7(1–2), 147–169. https://doi.org/10.3366/ijhac.2013.0086

Durkin, P. (2017, September 28). Release notes: A big antedating for white lie – and introducing Shakespeare’s world. Retrieved 29 September 2017, from Oxford English Dictionary website: http://public.oed.com/the-oed-today/recent-updates-to-the-oed/september-2017-update/release-notes-white-lie-and-shakespeares-world/

Eccles, K., & Greg, A. (2014). Your Paintings Tagger: Crowdsourcing Descriptive Metadata for a National Virtual Collection. In M. Ridge (Ed.), Crowdsourcing Our Cultural Heritage. Retrieved from http://www.ashgate.com/isbn/9781472410221

Edwards, D., & Graham, M. (2006). Museum volunteers and heritage sectors. Australian Journal on Volunteering, 11(1), 19–27.

European Citizen Science Association. (2015). 10 Principles of Citizen Science. Retrieved from https://ecsa.citizen-science.net/sites/default/files/ecsa_ten_principles_of_citizen_science.pdf

Eveleigh, A., Jennett, C., Blandford, A., Brohan, P., & Cox, A. L. (2014). Designing for dabblers and deterring drop-outs in citizen science. 2985–2994. https://doi.org/10.1145/2556288.2557262

Eveleigh, A., Jennett, C., Lynn, S., & Cox, A. L. (2013). I want to be a captain! I want to be a captain!: Gamification in the old weather citizen science project. Proceedings of the First International Conference on Gameful Design, Research, and Applications, 79–82. Retrieved from http://dl.acm.org/citation.cfm?id=2583019

Ferriter, M., Rosenfeld, C., Boomer, D., Burgess, C., Leachman, S., Leachman, V., … Shuler, M. E. (2016). We learn together: Crowdsourcing as practice and method in the Smithsonian Transcription Center. Collections, 12(2), 207–225. https://doi.org/10.1177/155019061601200213

Fleet, C., Kowal, K., & Přidal, P. (2012). Georeferencer: Crowdsourced Georeferencing for Map Library Collections. D-Lib Magazine, 18(11/12). https://doi.org/10.1045/november2012-fleet

Forum posters. (2010–present). Signs of OW addiction … Retrieved 11 April 2014, from Old Weather Forum » Shore Leave » Dockside Cafe website: http://forum.oldweather.org/index.php?topic=1432.0

Fugelstad, P., Dwyer, P., Filson Moses, J., Kim, J. S., Mannino, C. A., Terveen, L., & Snyder, M. (2012). What Makes Users Rate (Share, Tag, Edit…)? Predicting Patterns of Participation in Online Communities. Proceedings of the ACM 2012 Conference on Computer Supported Cooperative Work, 969–978. Retrieved from http://dl.acm.org/citation.cfm?id=2145349

Gilliver, P. (2012, October 4). ‘Your dictionary needs you’: A brief history of the OED’s appeals to the public. Retrieved from Oxford English Dictionary website: https://public.oed.com/history/history-of-the-appeals/

Goldstein, D. (1994). ‘Yours for Science’: The Smithsonian Institution’s Correspondents and the Shape of Scientific Community in Nineteenth-Century America. Isis, 85(4), 573–599.

Grayson, R. (2016). A Life in the Trenches? The Use of Operation War Diary and Crowdsourcing Methods to Provide an Understanding of the British Army’s Day-to-Day Life on the Western Front. British Journal for Military History, 2(2). Retrieved from http://bjmh.org.uk/index.php/bjmh/article/view/96

Hess, W. (2010, February 16). Onboarding: Designing Welcoming First Experiences. Retrieved 29 July 2014, from UX Magazine website: http://uxmag.com/articles/onboarding-designing-welcoming-first-experiences

Holley, R. (2009). Many Hands Make Light Work: Public Collaborative OCR Text Correction in Australian Historic Newspapers (No. March). Canberra: National Library of Australia.

Holley, R. (2010). Crowdsourcing: How and Why Should Libraries Do It? D-Lib Magazine, 16(3/4). https://doi.org/10.1045/march2010-holley

Holmes, K. (2003). Volunteers in the heritage sector: A neglected audience? International Journal of Heritage Studies, 9(4), 341–355. https://doi.org/10.1080/1352725022000155072

Kittur, A., Nickerson, J. V., Bernstein, M., Gerber, E., Shaw, A., Zimmerman, J., … Horton, J. (2013). The future of crowd work. Proceedings of the 2013 Conference on Computer Supported Cooperative Work, 1301–1318. Retrieved from http://dl.acm.org/citation.cfm?id=2441923

Lambert, S., Winter, M., & Blume, P. (2014, March 26). Getting to where we are now. Retrieved 4 March 2015, from 10most.org.uk website: http://10most.org.uk/content/getting-where-we-are-now

Lascarides, M., & Vershbow, B. (2014). What’s on the menu?: Crowdsourcing at the New York Public Library. In M. Ridge (Ed.), Crowdsourcing Our Cultural Heritage. Retrieved from http://www.ashgate.com/isbn/9781472410221

Latimer, J. (2009, February 25). Letter in the Attic: Lessons learnt from the project. Retrieved 17 April 2014, from My Brighton and Hove website: http://www.mybrightonandhove.org.uk/page/letterintheatticlessons?path=0p116p1543p

Lazy Registration design pattern. (n.d.). Retrieved 9 December 2018, from UI-Patterns.com website: http://ui-patterns.com/patterns/LazyRegistration

Leon, S. M. (2014). Build, Analyse and Generalise: Community Transcription of the Papers of the War Department and the Development of Scripto. In M. Ridge (Ed.), Crowdsourcing Our Cultural Heritage. Retrieved from http://www.ashgate.com/isbn/9781472410221

Mayer, R. E., & Moreno, R. (2003). Nine ways to reduce cognitive load in multimedia learning. Educational Psychologist, 38(1), 43–52.

McGonigal, J. (n.d.). Gaming the Future of Museums. Retrieved from http://www.slideshare.net/avantgame/gaming-the-future-of-museums-a-lecture-by-jane-mcgonigal-presentation#text-version

Mills, E. (2017, December). The Flitch of Bacon: An Unexpected Journey Through the Collections of the British Library. Retrieved 17 August 2018, from British Library Digital Scholarship blog website: http://blogs.bl.uk/digital-scholarship/2017/12/the-flitch-of-bacon-an-unexpected-journey-through-the-collections-of-the-british-library.html

Mitra, T., & Gilbert, E. (2014). The Language that Gets People to Give: Phrases that Predict Success on Kickstarter. Retrieved from http://comp.social.gatech.edu/papers/cscw14.crowdfunding.mitra.pdf

Mugar, G., Østerlund, C., Hassman, K. D., Crowston, K., & Jackson, C. B. (2014). Planet Hunters and Seafloor Explorers: Legitimate Peripheral Participation Through Practice Proxies in Online Citizen Science. Retrieved from http://crowston.syr.edu/sites/crowston.syr.edu/files/paper_revised%20copy%20to%20post.pdf

Mugar, G., Østerlund, C., Jackson, C. B., & Crowston, K. (2015). Being Present in Online Communities: Learning in Citizen Science. Proceedings of the 7th International Conference on Communities and Technologies, 129–138. https://doi.org/10.1145/2768545.2768555

Museums, Libraries and Archives Council. (2008). Generic Learning Outcomes. Retrieved 8 September 2014, from Inspiring Learning website: http://www.inspiringlearningforall.gov.uk/toolstemplates/genericlearning/

National Archives of Australia. (n.d.). ArcHIVE – homepage. Retrieved 18 June 2014, from ArcHIVE website: http://transcribe.naa.gov.au/

Nielsen, J. (1995). 10 Usability Heuristics for User Interface Design. Retrieved 29 April 2014, from http://www.nngroup.com/articles/ten-usability-heuristics/

Nov, O., Arazy, O., & Anderson, D. (2011). Technology-Mediated Citizen Science Participation: A Motivational Model. Proceedings of the AAAI International Conference on Weblogs and Social Media. Barcelona, Spain.

Oomen, J., Gligorov, R., & Hildebrand, M. (2014). Waisda?: Making Videos Findable through Crowdsourced Annotations. In M. Ridge (Ed.), Crowdsourcing Our Cultural Heritage. Retrieved from http://www.ashgate.com/isbn/9781472410221

Paas, F., Renkl, A., & Sweller, J. (2003). Cognitive Load Theory and Instructional Design: Recent Developments. Educational Psychologist, 38(1), 1–4. https://doi.org/10.1207/S15326985EP3801_1

Part I: Building a Great Project. (n.d.). Retrieved 9 December 2018, from Zooniverse Help website: https://help.zooniverse.org/best-practices/1-great-project/

Preist, C., Massung, E., & Coyle, D. (2014). Competing or aiming to be average?: Normification as a means of engaging digital volunteers. Proceedings of the 17th ACM Conference on Computer Supported Cooperative Work & Social Computing, 1222–1233. https://doi.org/10.1145/2531602.2531615

Raddick, M. J., Bracey, G., Gay, P. L., Lintott, C. J., Murray, P., Schawinski, K., … Vandenberg, J. (2010). Galaxy Zoo: Exploring the Motivations of Citizen Science Volunteers. Astronomy Education Review, 9(1), 18.

Raimond, Y., Smethurst, M., & Ferne, T. (2014, September 15). What we learnt by crowdsourcing the World Service archive. Retrieved 15 September 2014, from BBC R&D website: http://www.bbc.co.uk/rd/blog/2014/08/data-generated-by-the-world-service-archive-experiment-draft

Reside, D. (2014). Crowdsourcing Performing Arts History with NYPL’s ENSEMBLE. Presented at the Digital Humanities 2014. Retrieved from http://dharchive.org/paper/DH2014/Paper-131.xml

Ridge, M. (2011a). Playing with Difficult Objects – Game Designs to Improve Museum Collections. In J. Trant & D. Bearman (Eds.), Museums and the Web 2011: Proceedings. Retrieved from http://www.museumsandtheweb.com/mw2011/papers/playing_with_difficult_objects_game_designs_to

Ridge, M. (2011b). Playing with difficult objects: Game designs for crowdsourcing museum metadata (MSc Dissertation, City University London). Retrieved from http://www.miaridge.com/my-msc-dissertation-crowdsourcing-games-for-museums/

Ridge, M. (2013). From Tagging to Theorizing: Deepening Engagement with Cultural Heritage through Crowdsourcing. Curator: The Museum Journal, 56(4).

Ridge, M. (2014, November). Citizen History and its discontents. Presented at the IHR Digital History Seminar, Institute for Historical Research, London. Retrieved from https://hcommons.org/deposits/item/hc:17907/

Ridge, M. (2015). Making digital history: The impact of digitality on public participation and scholarly practices in historical research (Ph.D., Open University). Retrieved from http://oro.open.ac.uk/45519/

Ridge, M. (2018). British Library Digital Scholarship course 105: Exercises for Crowdsourcing in Libraries, Museums and Cultural Heritage Institutions. Retrieved from https://docs.google.com/document/d/1tx-qULCDhNdH0JyURqXERoPFzWuCreXAsiwHlUKVa9w/

Rotman, D., Preece, J., Hammock, J., Procita, K., Hansen, D., Parr, C., … Jacobs, D. (2012). Dynamic changes in motivation in collaborative citizen-science projects. Proceedings of the ACM 2012 Conference on Computer Supported Cooperative Work, 217–226. https://doi.org/10.1145/2145204.2145238

Sample Ward, A. (2011, May 18). Crowdsourcing vs Community-sourcing: What’s the difference and the opportunity? Retrieved 6 January 2013, from Amy Sample Ward’s Version of NPTech website: http://amysampleward.org/2011/05/18/crowdsourcing-vs-community-sourcing-whats-the-difference-and-the-opportunity/

Schmitt, J. R., Wang, J., Fischer, D. A., Jek, K. J., Moriarty, J. C., Boyajian, T. S., … Socolovsky, M. (2014). Planet Hunters. VI. An Independent Characterization of KOI-351 and Several Long Period Planet Candidates from the Kepler Archival Data. The Astronomical Journal, 148(2), 28. https://doi.org/10.1088/0004-6256/148/2/28

Secord, A. (1994). Corresponding interests: Artisans and gentlemen in nineteenth-century natural history. The British Journal for the History of Science, 27(04), 383–408. https://doi.org/10.1017/S0007087400032416

Shakespeare’s World Talk #OED. (Ongoing). Retrieved 21 April 2019, from https://www.zooniverse.org/projects/zooniverse/shakespeares-world/talk/239

Sharma, P., & Hannafin, M. J. (2007). Scaffolding in technology-enhanced learning environments. Interactive Learning Environments, 15(1), 27–46. https://doi.org/10.1080/10494820600996972

Shirky, C. (2011). Cognitive surplus: Creativity and generosity in a connected age. London, U.K.: Penguin.

Silvertown, J. (2009). A new dawn for citizen science. Trends in Ecology & Evolution, 24(9), 467–71. https://doi.org/10.1016/j.tree.2009.03.017

Simmons, B. (2015, August 24). Measuring Success in Citizen Science Projects, Part 2: Results. Retrieved 28 August 2015, from Zooniverse website: https://blog.zooniverse.org/2015/08/24/measuring-success-in-citizen-science-projects-part-2-results/

Simon, N. K. (2010). The Participatory Museum. Retrieved from http://www.participatorymuseum.org/chapter4/

Smart, P. R., Simperl, E., & Shadbolt, N. (2014). A Taxonomic Framework for Social Machines. In D. Miorandi, V. Maltese, M. Rovatsos, A. Nijholt, & J. Stewart (Eds.), Social Collective Intelligence: Combining the Powers of Humans and Machines to Build a Smarter Society. Retrieved from http://eprints.soton.ac.uk/362359/

Smithsonian Institution Archives. (2012, March 21). Meteorology. Retrieved 25 November 2017, from Smithsonian Institution Archives website: https://siarchives.si.edu/history/featured-topics/henry/meteorology

Springer, M., Dulabahn, B., Michel, P., Natanson, B., Reser, D., Woodward, D., & Zinkham, H. (2008). For the Common Good: The Library of Congress Flickr Pilot Project (pp. 1–55). Retrieved from Library of Congress website: http://www.loc.gov/rr/print/flickr_report_final.pdf

Stebbins, R. A. (1997). Casual leisure: A conceptual statement. Leisure Studies, 16(1), 17–25. https://doi.org/10.1080/026143697375485

The Culture and Sport Evidence (CASE) programme. (2011). Evidence of what works: Evaluated projects to drive up engagement (No. January; p. 19). Retrieved from Culture and Sport Evidence (CASE) programme website: http://www.culture.gov.uk/images/research/evidence_of_what_works.pdf

Trant, J. (2009). Tagging, Folksonomy and Art Museums: Results of steve.museum’s research (p. 197). Retrieved from Archives & Museum Informatics website: https://web.archive.org/web/20100210192354/http://conference.archimuse.com/files/trantSteveResearchReport2008.pdf

United States Government. (n.d.). Federal Crowdsourcing and Citizen Science Toolkit. Retrieved 9 December 2018, from CitizenScience.gov website: https://www.citizenscience.gov/toolkit/

Van Merriënboer, J. J. G., Kirschner, P. A., & Kester, L. (2003). Taking the load off a learner’s mind: Instructional design for complex learning. Educational Psychologist, 38(1), 5–13.

Vander Wal, T. (2007, February 2). Folksonomy. Retrieved 8 December 2018, from Vanderwal.net website: http://vanderwal.net/folksonomy.html

Veldhuizen, B., & Keinan-Schoonbaert, A. (2015, February 11). MicroPasts: Crowdsourcing Cultural Heritage Research. Retrieved 8 December 2018, from Sketchfab Blog website: https://blog.sketchfab.com/micropasts-crowdsourcing-cultural-heritage-research/

Verwayen, H., Fallon, J., Schellenberg, J., & Kyrou, P. (2017). Impact Playbook for museums, libraries and archives. Europeana Foundation.

Vetter, J. (2011). Introduction: Lay Participation in the History of Scientific Observation. Science in Context, 24(02), 127–141. https://doi.org/10.1017/S0269889711000032

von Ahn, L., & Dabbish, L. (2008). Designing games with a purpose. Communications of the ACM, 51(8), 57. https://doi.org/10.1145/1378704.1378719

Wenger, E. (2010). Communities of practice and social learning systems: The career of a concept. In Social Learning Systems and communities of practice. Springer Verlag and the Open University.

Whitenton, K. (2013, December 22). Minimize Cognitive Load to Maximize Usability. Retrieved 12 September 2014, from Nielsen Norman Group website: http://www.nngroup.com/articles/minimize-cognitive-load/

WieWasWie Project informatie. (n.d.). Retrieved 1 August 2014, from VeleHanden website: http://velehanden.nl/projecten/bekijk/details/project/wiewaswie_bvr

Willett, K. (n.d.). New paper: Galaxy Zoo and machine learning. Retrieved 31 March 2015, from Galaxy Zoo website: http://blog.galaxyzoo.org/2015/03/31/new-paper-galaxy-zoo-and-machine-learning/

Wood, D., Bruner, J. S., & Ross, G. (1976). The role of tutoring in problem solving. Journal of Child Psychology and Psychiatry, and Allied Disciplines, 17(2), 89–100.